Tokenization for Positional Type Input File Using Migration.properties File

In this sample, a positional type input data file is tokenized with the CT-V Bulk Utility using the migration.properties file.

Creating the Input Data File

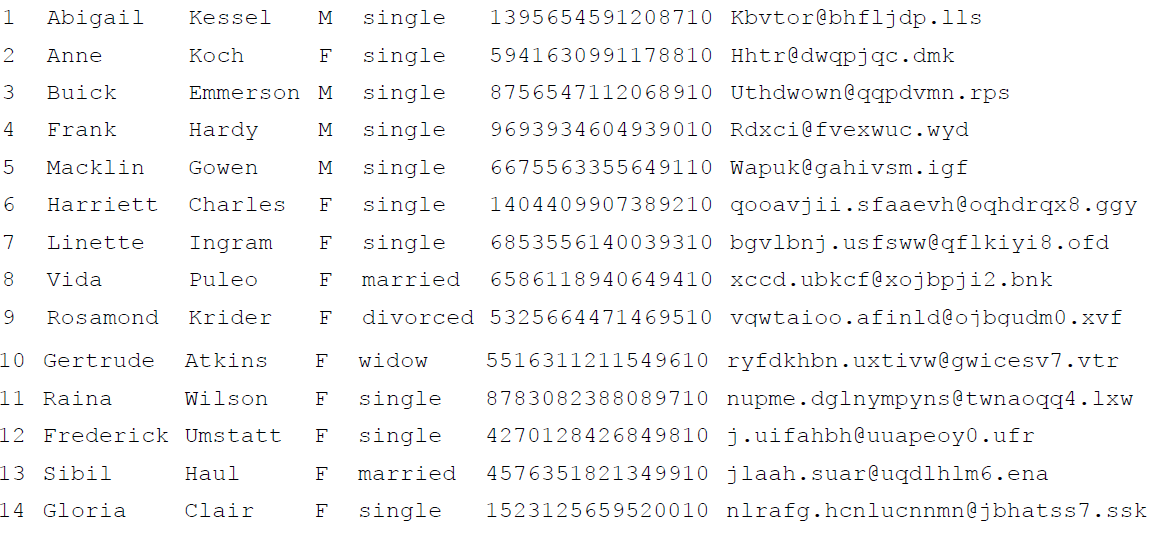

Below is the data that will be used to populate the customerTable_Positional.csv file:

Setting Parameters for the migration.properties File

Below are the parameters set for the positional format input data file:

```properties
#####################
# Input Configuration
# Input.FilePath
# Input.Type
#####################
#
Input.FilePath = C:\\Desktop\\migration\\customerTable_Positional.csv
#
Input.Type = Positional
```
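The doubled backslashes in Input.FilePath are needed because, in Java .properties syntax, a backslash starts an escape sequence, so `\\` stands for one literal backslash (a single `\D` would be read as just `D`). The Python sketch below is a simplified, hypothetical reader (`read_properties` is not part of the Bulk Utility). It handles only `key = value` lines, `#` comments, and doubled backslashes, not the full Java Properties format, and shows how the path value is interpreted.

```python
def read_properties(text: str) -> dict[str, str]:
    """Simplified .properties reader: 'key = value' lines, '#' comments,
    and doubled-backslash escapes only (not the full Java format)."""
    props: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        # Skip blank lines and comment lines such as '#####' banners.
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if not sep:
            continue  # not a 'key = value' line; ignored in this sketch
        # In Java .properties syntax, a doubled backslash is one literal backslash.
        props[key.strip()] = value.strip().replace("\\\\", "\\")
    return props


sample = r"""
#
Input.FilePath = C:\\Desktop\\migration\\customerTable_Positional.csv
#
Input.Type = Positional
"""

props = read_properties(sample)
print(props["Input.FilePath"])  # C:\Desktop\migration\customerTable_Positional.csv
print(props["Input.Type"])      # Positional
```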

```properties
################################
# Positional Input Configuration
# Input.Column0.Start
# Input.Column0.End
# ...
# Input.ColumnN.Start
# Input.ColumnN.End
# Note: For positional input type, a tab will be considered as a single character.
################################
#
Input.Column0.Start = 0
Input.Column1.Start = 3
Input.Column2.Start = 15
Input.Column3.Start = 26
Input.Column4.Start = 28
Input.Column5.Start = 36
Input.Column6.Start = 53
#
#
Input.Column0.End = 2
Input.Column1.End = 13
Input.Column2.End = 25
Input.Column3.End = 27
Input.Column4.End = 36
Input.Column5.End = 52
Input.Column6.End = 83
```
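To make the Start/End values concrete, the Python sketch below cuts one record into the seven columns defined above. It assumes zero-based character offsets and an inclusive End value; the CT-V documentation is the authority on the exact convention. `split_positional` and the digit-string record are hypothetical illustrations, not part of the Bulk Utility.

```python
# Column layout copied from the migration.properties sample above:
# column index -> (Input.ColumnN.Start, Input.ColumnN.End).
LAYOUT = {
    0: (0, 2), 1: (3, 13), 2: (15, 25), 3: (26, 27),
    4: (28, 36), 5: (36, 52), 6: (53, 83),
}


def split_positional(line: str, layout: dict[int, tuple[int, int]]) -> list[str]:
    """Cut one fixed-width record into fields, ordered by column index.

    Assumption (not confirmed by the CT-V docs): offsets are zero-based
    character positions and End is inclusive, hence end + 1 in the slice.
    A tab is one character in a Python string, matching the note above.
    """
    return [line[start:end + 1].strip() for _, (start, end) in sorted(layout.items())]


# Hypothetical 84-character record: each character is its offset modulo 10,
# which makes it easy to see which offsets each column covers.
record = "".join(str(i % 10) for i in range(84))
fields = split_positional(record, LAYOUT)
print(fields[0])  # 012
print(fields[3])  # 67
```

Under the inclusive-End assumption, offset 36 falls in both Column4 and Column5, so these two boundaries are worth checking against the actual record layout.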

```properties
###########################################
# Tokenization Configuration
# Tokenizer.Column0.TokenVault
# Tokenizer.Column0.CustomDataColumnIndex
# Tokenizer.Column0.TokenFormat
# Tokenizer.Column0.LuhnCheck
# ...
# Tokenizer.ColumnN.TokenVault
# Tokenizer.ColumnN.CustomDataColumnIndex
# Tokenizer.ColumnN.TokenFormat
# Tokenizer.ColumnN.LuhnCheck
############################################
#
Tokenizer.Column5.TokenVault = BTM
Tokenizer.Column6.TokenVault = BTM_2
#
Tokenizer.Column5.CustomDataColumnIndex = -1
Tokenizer.Column6.CustomDataColumnIndex = -1
#
Tokenizer.Column5.TokenFormat = LAST_FOUR_TOKEN
Tokenizer.Column6.TokenFormat = EMAIL_ADDRESS_TOKEN
#
Tokenizer.Column5.LuhnCheck = true
Tokenizer.Column6.LuhnCheck = false
```
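Column 5 is configured with LAST_FOUR_TOKEN and LuhnCheck = true, which suggests card-number data. How CT-V applies LuhnCheck when it generates tokens is defined by the product; the Python sketch below only shows the standard Luhn (mod 10) checksum that the parameter name refers to. The function name `luhn_valid` is hypothetical.

```python
def luhn_valid(number: str) -> bool:
    """Return True if the digits pass the Luhn (mod 10) checksum.

    Non-digit characters such as spaces or dashes are ignored.
    """
    digits = [int(c) for c in number if c.isdigit()]
    if not digits:
        return False
    total = 0
    # Walk from the rightmost digit and double every second digit.
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9  # same as adding the two digits of the product
        total += d
    return total % 10 == 0


print(luhn_valid("4111111111111111"))  # True (a widely used test card number)
print(luhn_valid("4111111111111112"))  # False
```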

```properties
######################
# Output Configuration
# Output.FilePath
# Output.Sequence
######################
#
Output.FilePath = C:\\Desktop\\migration\\tokenized.csv
# Set a positive value for each column to be tokenized. For example, columns 5 and 6
# are set to positive values below, so only these two columns are tokenized.
Output.Sequence = 0,-1,-2,-3,-4,5,6
```
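The Python sketch below reads Output.Sequence the way the comment in the sample describes it: a positive entry marks a column to be tokenized, while zero or a negative entry writes the column as-is, with the absolute value giving the column index. This reading, and the name `parse_output_sequence`, are assumptions for illustration. In this sample, columns 5 and 6 are also the only columns with Tokenizer settings.

```python
def parse_output_sequence(value: str) -> list[tuple[int, bool]]:
    """Return (column index, tokenized?) pairs in output order.

    Assumption based on the sample's comment: positive -> tokenized;
    zero or negative -> written as-is; abs(entry) is the column index.
    """
    result = []
    for item in value.split(","):
        n = int(item.strip())
        result.append((abs(n), n > 0))
    return result


seq = parse_output_sequence("0,-1,-2,-3,-4,5,6")
print([col for col, tokenized in seq if tokenized])  # [5, 6]
```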

```properties
# TokenSeparator
#
# Specifies whether the tokens are space separated.
# Note: This parameter is ignored if Input.Type is set to Delimited.
#
# Valid values
# true
# false
# Note: Default value is set to true.
TokenSeparator = true
#
# StreamInputData
#
# Specifies whether the input data is streamed.
#
# Valid values
# true
# false
# Note: Default value is set to false.
#
StreamInputData = false
```

Note: If StreamInputData is set to true, the TokenSeparator parameter is not considered.
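Taken together, the notes on TokenSeparator and StreamInputData mean TokenSeparator only has an effect for non-delimited, non-streamed input. The Python sketch below encodes those rules, returning None when the parameter is ignored. The function name and the case-insensitive comparison are assumptions of this sketch, not documented Bulk Utility behavior.

```python
from typing import Optional


def effective_token_separator(props: dict[str, str]) -> Optional[bool]:
    """Return None when TokenSeparator is ignored, else its value (default true)."""
    # Ignored for delimited input files.
    if props.get("Input.Type", "").strip().lower() == "delimited":
        return None
    # Ignored when the input data is streamed (StreamInputData defaults to false).
    if props.get("StreamInputData", "false").strip().lower() == "true":
        return None
    return props.get("TokenSeparator", "true").strip().lower() == "true"


sample = {"Input.Type": "Positional", "TokenSeparator": "true", "StreamInputData": "false"}
print(effective_token_separator(sample))  # True
```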

```properties
#
# CodePageUsed
#
# Specifies the code page in use.
# Used with the EBCDIC character set; for example, use "ibm500" for EBCDIC International.
# https://docs.oracle.com/javase/7/docs/api/java/nio/charset/Charset.html
#
CodePageUsed =
```

Note: If no value is specified, the ASCII character set is used by default.
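CodePageUsed matters because the same characters are stored as different byte values under different code pages. Python names the EBCDIC International code page `cp500`; the sample above suggests "ibm500" for the Bulk Utility. The sketch below only illustrates the encoding difference; it does not reproduce how the Bulk Utility reads files.

```python
text = "AB12"

ascii_bytes = text.encode("ascii")
ebcdic_bytes = text.encode("cp500")  # Python's codec for EBCDIC International (IBM 500)

print(ascii_bytes.hex())   # 41423132
print(ebcdic_bytes.hex())  # c1c2f1f2

# Decoding EBCDIC bytes with the wrong code page produces different characters.
print(ebcdic_bytes.decode("latin-1"))  # ÁÂñò
# Decoding with the matching code page restores the original text.
print(ebcdic_bytes.decode("cp500"))    # AB12
```

Both are single-byte code pages, so each character still occupies one position; only the byte values differ.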

```properties
#
# FailureThreshold
#
# Specifies the number of errors after which the Bulk Utility aborts the
# tokenization operation.
# Valid values
# -1 = Tokenization continues irrespective of the number of errors during the
# operation. This is the default value.
# 0 = The Bulk Utility aborts the operation on the occurrence of any error.
# Any positive value = Indicates the failure threshold, after which the Bulk
# Utility aborts the operation.
#
# Note: If no value or a negative value is specified, the Bulk Utility continues
# irrespective of the number of errors.
#
FailureThreshold = -1
###############################################################################
# END
###############################################################################
```
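The FailureThreshold rules can be summarized as a single decision. The Python sketch below treats a missing or negative value as "never abort", 0 as "abort on the first error", and a positive n as "abort once the error count exceeds n". The last reading of "after which" and the name `should_abort` are assumptions for illustration.

```python
from typing import Optional


def should_abort(error_count: int, threshold: Optional[int]) -> bool:
    """Decide whether to stop, following the FailureThreshold description.

    None or a negative threshold: never abort (the default is -1).
    0: abort on the first error.
    Positive n: abort once the error count exceeds n (assumed reading).
    """
    if threshold is None or threshold < 0:
        return False
    return error_count > threshold


print(should_abort(1, 0))    # True
print(should_abort(500, -1)) # False
```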

Running CipherTrust Vaulted Tokenization Bulk Utility

Enter the following command to tokenize the input file with the CT-V Bulk Utility in a Windows environment:

```
java -cp SafeNetTokenService-8.12.3.000.jar com.safenet.token.migration.main migration.properties -t DSU=NAE_User1 DSP=qwerty12345 DBU=DB_User1 DBP=abcd1234
```
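When the utility is launched from a script rather than typed at a prompt, passing the arguments as a list avoids shell quoting problems. The Python sketch below builds the same argument list as the command above, assuming the JAR and migration.properties are in the current directory; the credentials are the sample's placeholder values. The helper names are hypothetical, and only `run_bulk_utility` actually starts Java.

```python
import subprocess


def build_bulk_utility_command(dsu: str, dsp: str, dbu: str, dbp: str,
                               jar: str = "SafeNetTokenService-8.12.3.000.jar",
                               properties: str = "migration.properties") -> list[str]:
    """Return the argument list for the tokenization command in this sample."""
    return [
        "java", "-cp", jar,
        "com.safenet.token.migration.main", properties,
        "-t",  # a plain ASCII hyphen, not an en dash
        f"DSU={dsu}", f"DSP={dsp}", f"DBU={dbu}", f"DBP={dbp}",
    ]


def run_bulk_utility(cmd: list[str]) -> None:
    """Launch the Bulk Utility; requires Java and the JAR on this machine."""
    subprocess.run(cmd, check=True)


cmd = build_bulk_utility_command("NAE_User1", "qwerty12345", "DB_User1", "abcd1234")
print(" ".join(cmd))
```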

Reviewing the Output File

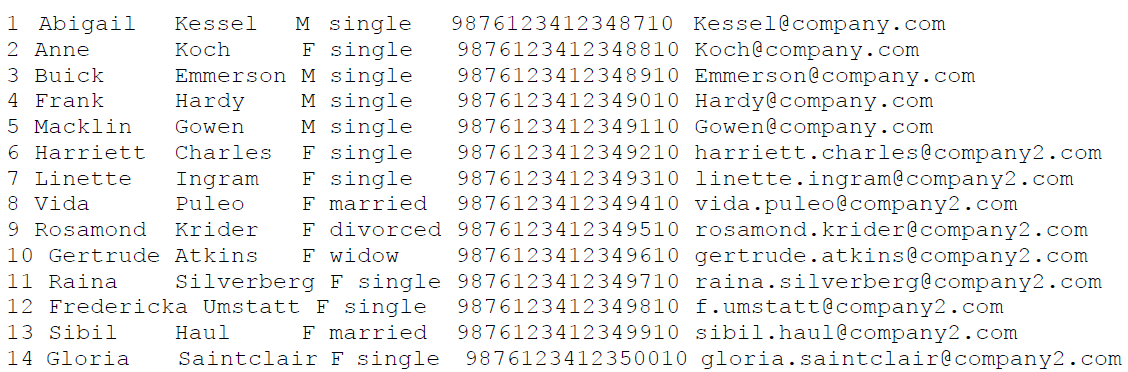

The output data file is saved to the path specified by Output.FilePath in the migration.properties file, with the name tokenized.csv. As specified by the Output.Sequence parameter, only columns 5 and 6 are tokenized.

Here is the data from the output file: